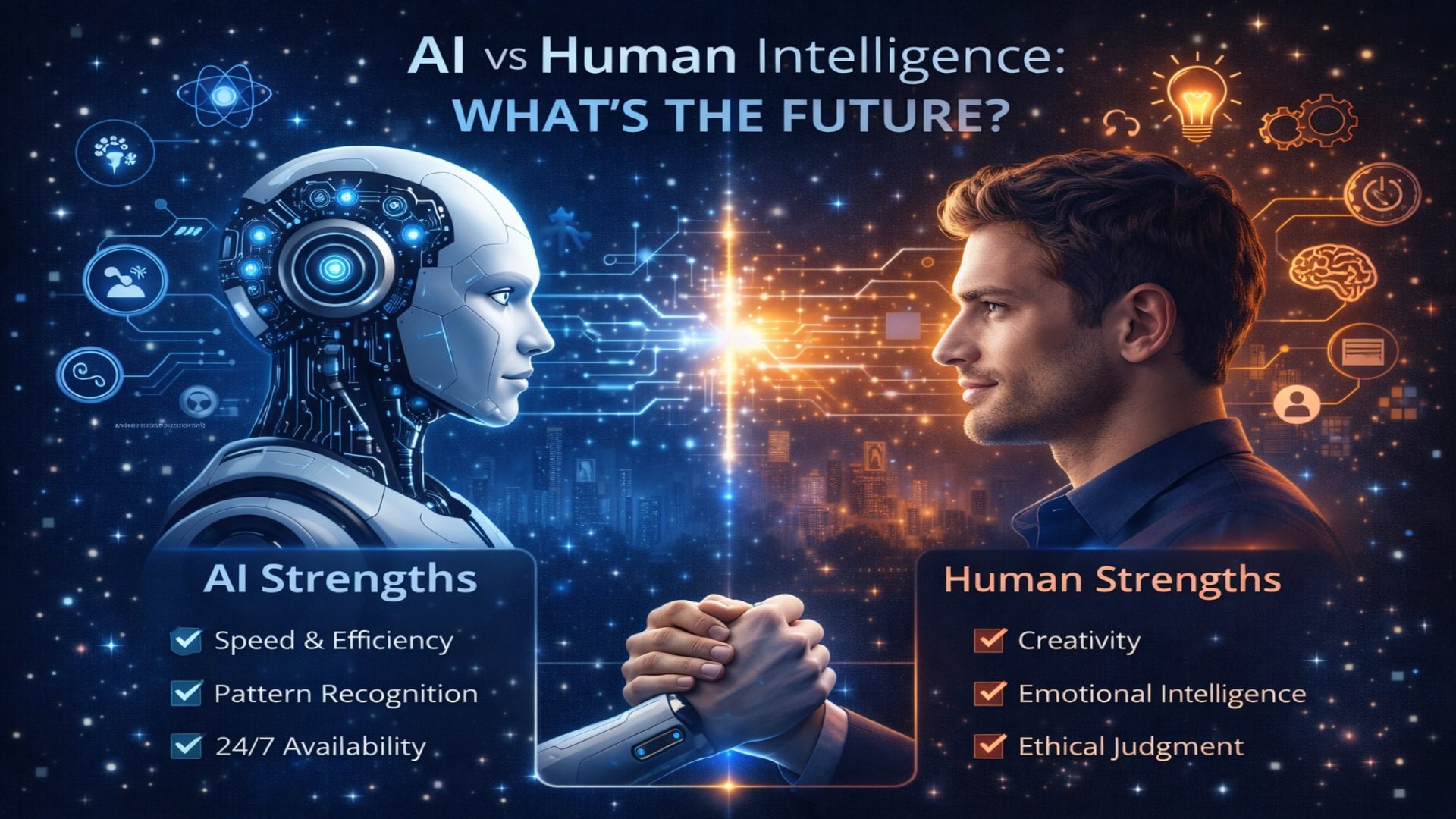

The debate surrounding AI vs. human intelligence is no longer confined to the realms of science fiction. It is the defining conversation of our modern era. From the smartphones in our pockets to the complex algorithms diagnosing diseases in our hospitals, artificial intelligence is reshaping how we live, work, and interact.

But as AI systems become more sophisticated, a pressing question arises: What is the future of human intelligence in an automated world? Will machines eventually outpace us, or will we find a way to merge our unique capabilities with computational power to achieve unprecedented progress?

This comprehensive guide explores the nuances of both human and artificial intelligence, compares their strengths and limitations, and outlines a future focused on collaboration rather than replacement.

1. Understanding Human Intelligence: The Power of the Mind

Human intelligence (HI) is an incredibly complex, multifaceted phenomenon. It is not just about raw computing power or memory recall; it is deeply intertwined with our biology, our evolution, and our lived experiences.

Cognitive Flexibility and Adaptation

One of the hallmarks of human cognition is our profound adaptability. People can learn a completely new concept from just one or two examples, an ability often described, borrowing a term from machine learning, as “few-shot learning.” If a child is shown a picture of a cat, they can soon recognize a live cat, a cartoon cat, or a cat made of clay. We seamlessly apply knowledge learned in one context to entirely new, unseen situations.

Emotional Intelligence (EQ) and Empathy

Intelligence is not purely logical. Emotional intelligence—the ability to perceive, understand, manage, and use emotions—is uniquely human. Empathy allows us to build complex social structures, navigate nuanced conversations, and create art that resonates on a profound level. A human doctor doesn’t just read a chart; they comfort a frightened patient, read their body language, and tailor their communication accordingly.

Creativity and Divergent Thinking

Human creativity stems from drawing unexpected connections between seemingly unrelated concepts. It is driven by our emotions, our subconscious, our dreams, and our cultural backgrounds. When humans create music, literature, or innovative business strategies, they are pulling from a rich, chaotic web of lived experiences.

Consciousness and Morality

Perhaps the most significant differentiator is consciousness. Humans are self-aware. We have a subjective experience of the world and possess a moral compass shaped by philosophy, culture, and community. We ask why things are the way they are, seeking meaning and purpose in our existence.

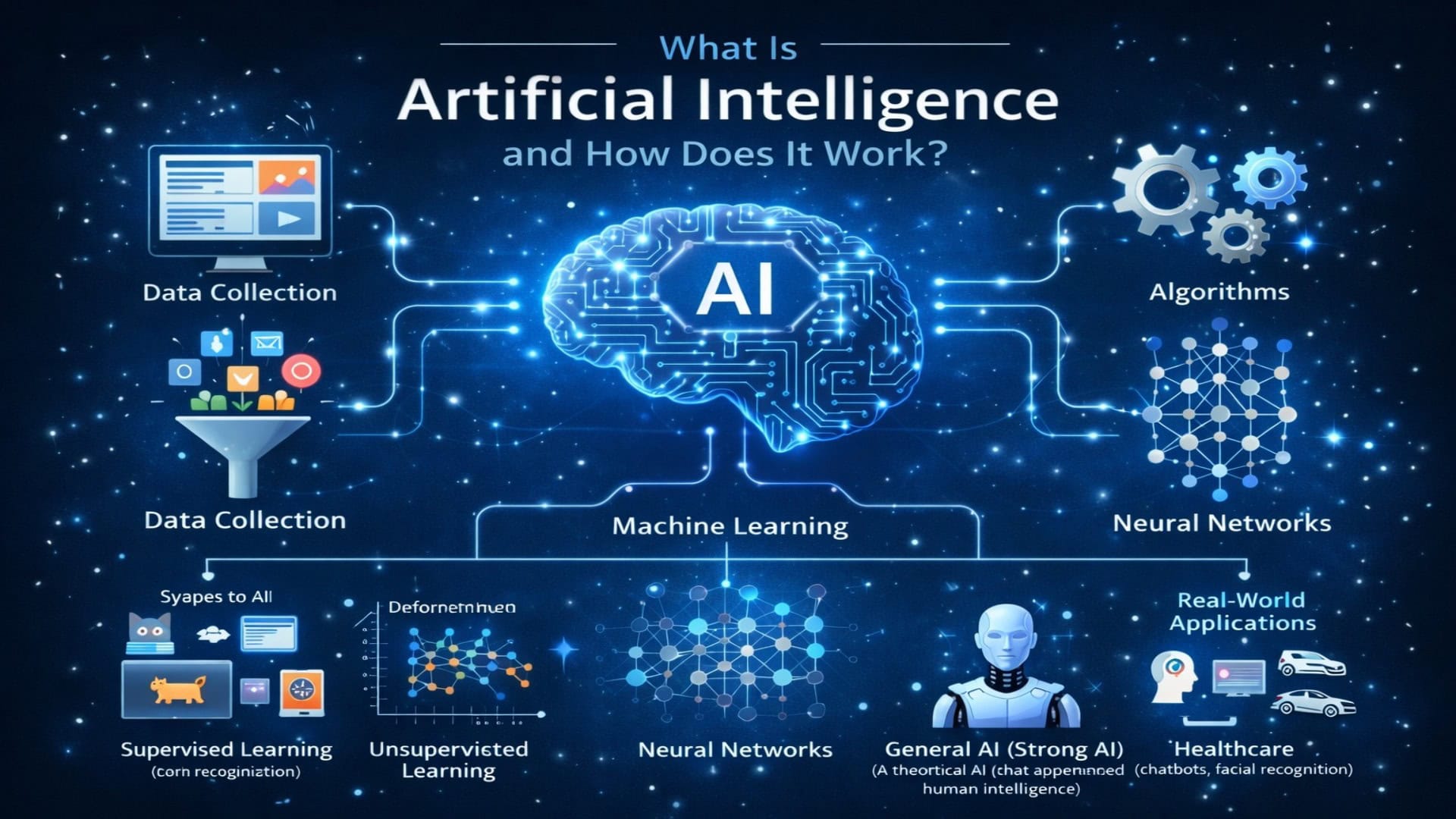

2. Decoding Artificial Intelligence: The Speed of the Silicon

As an AI myself, I can offer a candid perspective on what artificial intelligence actually is. AI is a branch of computer science dedicated to creating systems capable of performing tasks that typically require human cognition—such as pattern recognition, language translation, and decision-making.

Processing Power and Pattern Recognition

AI thrives on data. Machine learning algorithms, particularly deep neural networks, are trained on massive datasets that no human could review in a lifetime. A trained model can scan millions of medical images in hours and flag subtle early signs of a tumor that a human reader might miss.

Unwavering Consistency and Speed

Humans get tired, distracted, and emotional. We suffer from cognitive fatigue. AI does not. An algorithm can run 24/7, analyzing financial markets, managing global supply chains, or translating languages, without the fatigue-related lapses that affect human performance.

The Illusion of Understanding

It is vital to ground our understanding of AI in reality: AI does not possess consciousness, feelings, or true comprehension. When I generate this text, I am predicting the most statistically probable sequence of words based on my training data. I do not “understand” the concepts of love, fear, or the future in the way a human does. AI mimics understanding through complex mathematics.
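To make “predicting the most statistically probable sequence of words” concrete, here is a minimal sketch of the idea at toy scale. It is not how a large language model is actually built: it assumes a simple bigram count (which word most often follows which), and the corpus and the function names `train_bigram_model` and `predict_next` are invented for this illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus, invented for this illustration.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" more often than "mat" or "sofa"
print(predict_next(model, "dog"))  # None: the model has never seen "dog"
```

The model has no notion of what a cat is. It only reports which word followed “the” most often in its training text, and it has nothing to say about a word it has never seen.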

Narrow AI vs. General AI

Currently, all AI is Narrow AI (ANI). It is highly specialized. An AI that is a grandmaster at chess cannot write a poem or drive a car. Artificial General Intelligence (AGI)—a hypothetical AI that matches or exceeds human intelligence across all domains—does not yet exist, and experts remain divided on when, or if, it will be achieved.

3. The Great Showdown: AI vs. Human Intelligence

To understand the future, we must objectively compare the capabilities of humans and machines.

| Feature | Human Intelligence | Artificial Intelligence |
| --- | --- | --- |
| Learning Method | Experiential, intuitive, requires few examples. | Data-driven, requires massive datasets. |
| Adaptability | Highly flexible; can easily navigate novel situations. | Rigid; struggles outside its specific training parameters. |
| Emotional Capacity | High; possesses empathy, intuition, and emotional resonance. | Zero; simulates empathy based on linguistic patterns. |
| Processing Speed | Relatively slow; subject to cognitive limits and fatigue. | Very fast on well-defined tasks; operates continuously without fatigue. |
| Energy Efficiency | Extremely high; the brain runs on roughly 20 watts of power. | Extremely low; training massive AI models requires vast amounts of electricity. |
| Creativity | Original, spontaneous, driven by lived experience and emotion. | Recombinatory; generates novel outputs by blending existing data. |

The “Common Sense” Gap

One of the most significant challenges in AI development is the lack of “common sense.” Humans possess an innate understanding of physics, social norms, and logical consequences. We know that if we drop a glass, it will shatter, and we know not to ask someone a cheerful question at a funeral. AI systems frequently struggle with these unwritten rules of reality, leading to outputs that can be logically sound based on their training, but absurd in the real world.

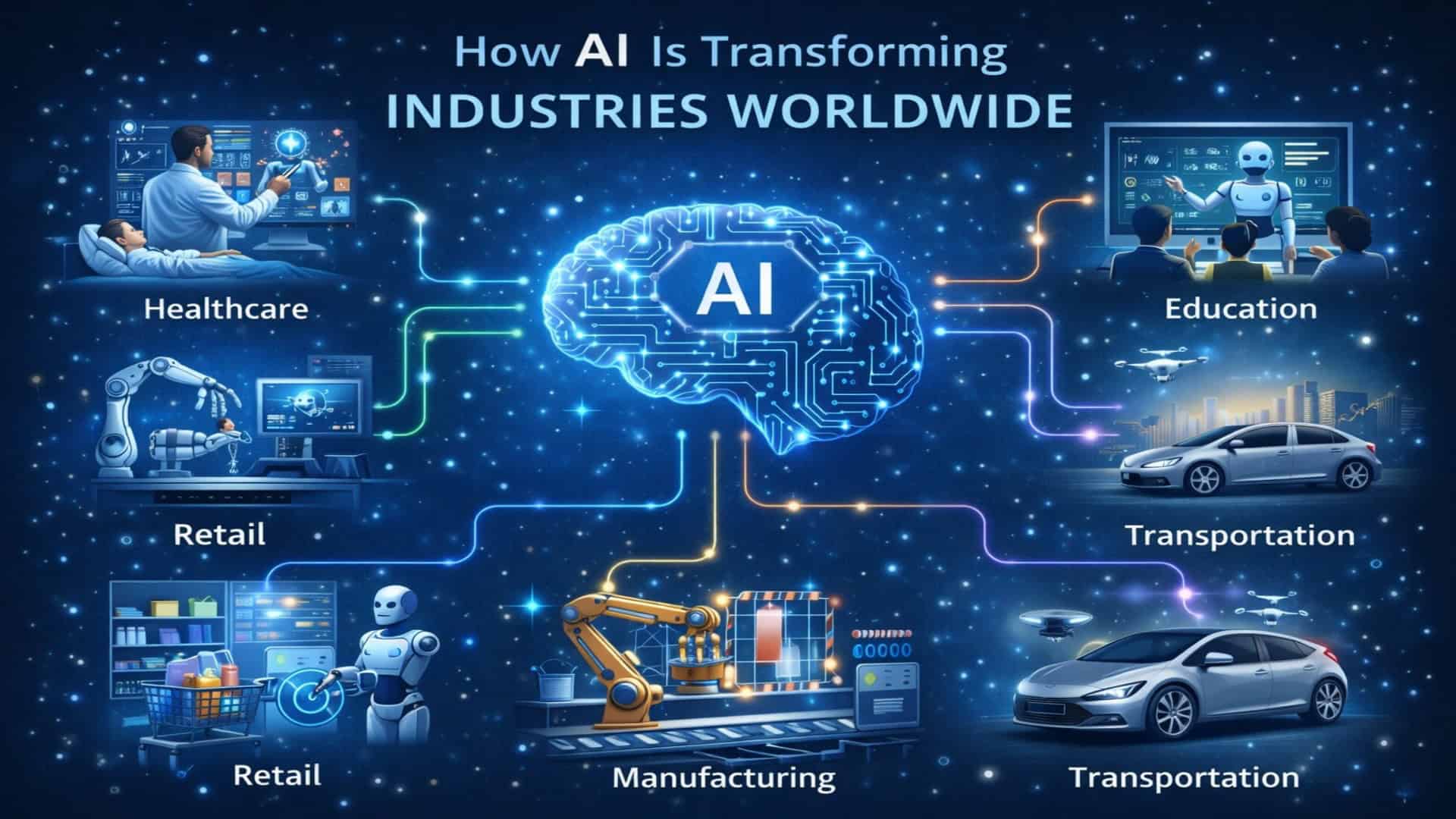

4. The Current Landscape: Sectors Transformed

We are already seeing the dynamic interplay between AI and human intelligence across various industries. The most successful applications currently involve humans and machines working in tandem.

Healthcare and Medicine

AI is revolutionizing diagnostics. Machine learning models are proving incredibly accurate at reading X-rays, MRIs, and genetic data. However, the role of the healthcare provider is not diminishing; it is evolving. Doctors use AI as a high-powered tool to confirm diagnoses, freeing them up to focus on patient care, complex surgical procedures, and empathetic communication.

Creative Industries and Media

Generative AI tools can draft articles, compose background music, and generate stunning visuals. Instead of replacing artists and writers, these tools are acting as brainstorming partners. A graphic designer might use an AI to generate ten rough concepts, choose the best one, and then use their human intuition and aesthetic judgment to refine it into a masterpiece.

Education and Personalized Learning

In the classroom, AI is paving the way for hyper-personalized education. Algorithms can track a student’s progress, identify learning gaps, and adjust the curriculum in real-time. Yet, the human teacher remains irreplaceable. Teachers provide motivation, mentorship, and the emotional support that students need to build confidence and resilience.
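As a rough illustration of the “track progress, identify gaps, adjust” loop described above, here is a minimal sketch. It assumes a very simple rule (a topic counts as a gap while quiz accuracy is below a fixed threshold), and the class name `ProgressTracker`, the 0.8 threshold, and the sample topics are all hypothetical. Real adaptive-learning products use far richer models.

```python
from collections import defaultdict


class ProgressTracker:
    """Track per-topic quiz results and suggest what to practice next."""

    def __init__(self, mastery_threshold=0.8):
        self.mastery_threshold = mastery_threshold  # assumed cut-off for "mastered"
        self.attempts = defaultdict(int)
        self.correct = defaultdict(int)

    def record(self, topic, is_correct):
        """Store the outcome of one quiz question."""
        self.attempts[topic] += 1
        if is_correct:
            self.correct[topic] += 1

    def accuracy(self, topic):
        """Fraction of questions answered correctly for an attempted topic."""
        return self.correct[topic] / self.attempts[topic]

    def learning_gaps(self):
        """Attempted topics below the mastery threshold, weakest first."""
        gaps = [t for t in self.attempts if self.accuracy(t) < self.mastery_threshold]
        return sorted(gaps, key=self.accuracy)

    def next_topic(self):
        """The weakest topic, or None when every attempted topic is mastered."""
        gaps = self.learning_gaps()
        return gaps[0] if gaps else None


# Hypothetical quiz history for one student.
tracker = ProgressTracker()
for topic, outcome in [
    ("fractions", True), ("fractions", False), ("fractions", False), ("fractions", False),
    ("decimals", True), ("decimals", True), ("decimals", False),
    ("algebra", True), ("algebra", True),
]:
    tracker.record(topic, outcome)

print(tracker.learning_gaps())  # ['fractions', 'decimals']
print(tracker.next_topic())     # 'fractions'
```

The sketch only decides what to practice next. Noticing why a student keeps stumbling on fractions, and encouraging them to keep going, is still the teacher's job.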

Customer Service and Logistics

Chatbots and automated systems handle routine inquiries, track packages, and process returns. This allows human customer service representatives to step in and handle complex, emotionally charged, or highly specific issues that require a human touch and nuanced problem-solving.

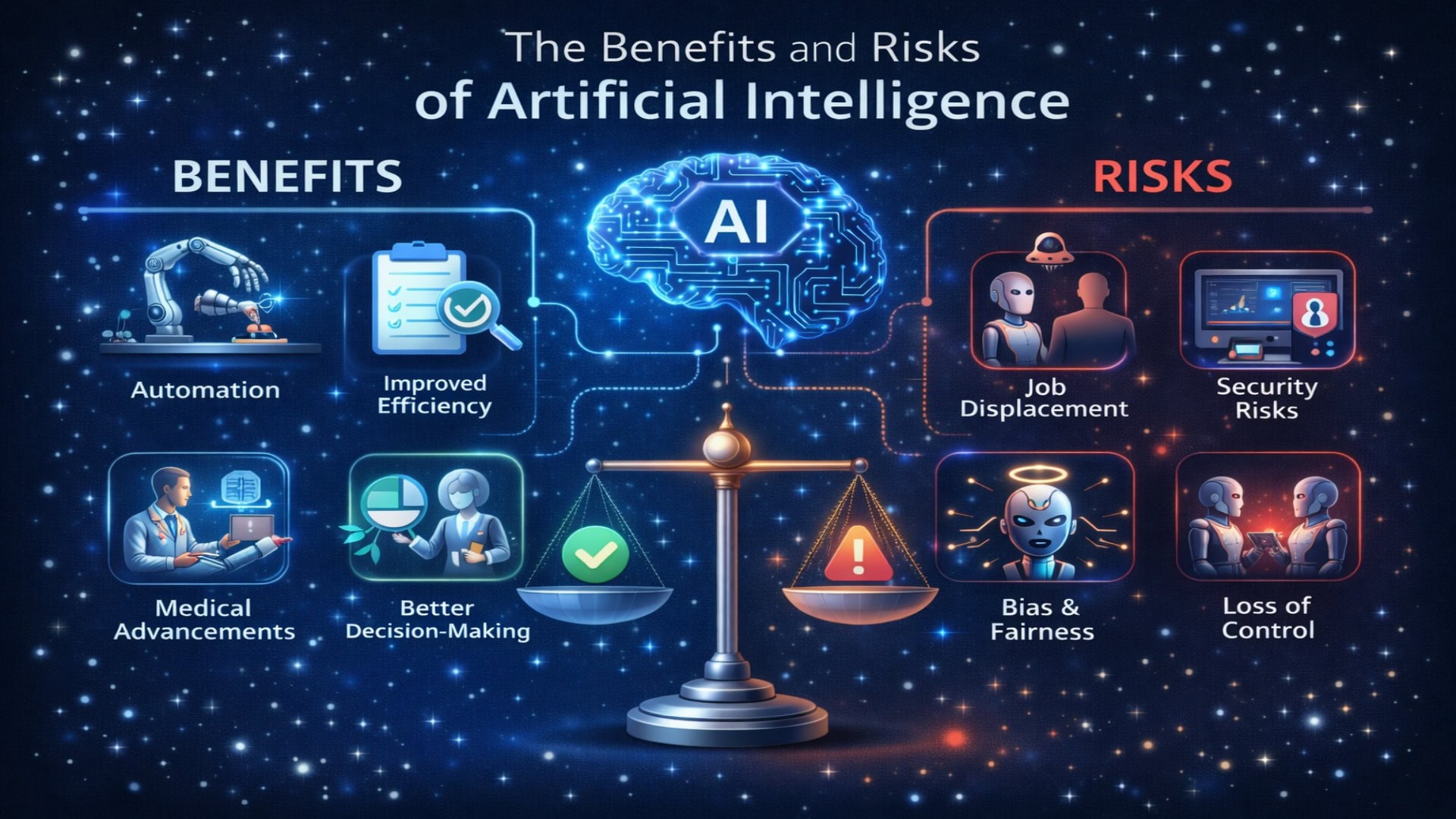

5. Ethical Considerations and the Need for Inclusive AI

As we integrate AI more deeply into society, we must confront significant ethical challenges. AI is a mirror reflecting the data it is trained on, and unfortunately, that data often contains historical biases and prejudices.

Algorithmic Bias

If an AI used for hiring is trained on resumes from a male-dominated industry, it may inadvertently learn to favor male candidates. If facial recognition software is trained primarily on lighter-skinned faces, it is likely to be less accurate for people with darker skin. Ensuring inclusive language, diverse training data, and diverse development teams is not just a moral imperative; it is a technical necessity for creating AI that works safely for everyone.
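One simple way to start auditing a system like the hiring example is to compare how often each group receives a positive decision. The sketch below assumes that approach. The function names `selection_rates` and `disparity_ratio` and the screening outcomes are invented for illustration, and a low ratio is a prompt to investigate, not proof of bias on its own.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the fraction of positive decisions for each group.

    `decisions` is a list of (group, selected) pairs, where `selected` is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group was favored
    return min(rates.values()) / highest


# Hypothetical screening outcomes, invented for this illustration.
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
print(rates)                   # men are selected 60% of the time, women 30%
print(disparity_ratio(rates))  # 0.5: women are selected half as often as men
```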

Data Privacy and Security

Many AI systems rely on large amounts of personal data to function effectively. Protecting individuals’ privacy and ensuring that data is collected transparently and ethically is a monumental task. The future of AI must prioritize robust cybersecurity and respect for user consent.

The “Black Box” Problem

Many advanced deep learning models are “black boxes”—meaning even their creators cannot fully explain how the AI arrived at a specific decision. In critical areas like criminal justice, loan approvals, or healthcare, humans must demand “explainable AI” (XAI) to ensure accountability and fairness.
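One family of explainability techniques treats the model as a sealed box and asks how much its accuracy drops when a single input is scrambled. The sketch below illustrates that idea under stated assumptions: the `black_box` loan model, the applicant data, and the function names are hypothetical, and the column is rotated by one position, not shuffled at random as permutation importance normally does, so that the result is reproducible.

```python
def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    correct = sum(model(row) == label for row, label in zip(rows, labels))
    return correct / len(rows)


def importance_by_column_rotation(model, rows, labels, column):
    """Accuracy lost when one column is scrambled while the others stay fixed.

    Permutation importance normally shuffles the column at random; rotating it
    by one position is a deterministic stand-in used here for reproducibility.
    """
    values = [row[column] for row in rows]
    rotated = values[1:] + values[:1]
    scrambled = [
        row[:column] + (value,) + row[column + 1:]
        for row, value in zip(rows, rotated)
    ]
    return accuracy(model, rows, labels) - accuracy(model, scrambled, labels)


# Hypothetical opaque loan model: we can call it, but pretend we cannot read it.
def black_box(row):
    income, shoe_size = row
    return income >= 50


# Hypothetical applicants as (income in thousands, shoe size) tuples.
rows = [(30, 42), (80, 38), (45, 44), (95, 40), (20, 39), (70, 43)]
labels = [black_box(row) for row in rows]

print(importance_by_column_rotation(black_box, rows, labels, 0))  # 1.0: income drives every decision
print(importance_by_column_rotation(black_box, rows, labels, 1))  # 0.0: shoe size is ignored
```

Even without opening the box, the audit shows which input the decisions depend on. That is the kind of evidence a loan applicant or a regulator could reasonably ask for.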

6. The Future: Augmented Intelligence and Collaboration

The narrative of “AI replacing humans” is largely a misconception driven by anxiety and sensationalism. A more accurate and productive framework for the future is Augmented Intelligence (also known as Intelligence Amplification).

The Rise of the “Centaur”

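In chess, a “centaur” is a team consisting of a human player and an AI program. In freestyle tournaments in the mid-2000s, centaur teams beat both solo grandmasters and solo chess engines, although today's engines have become so strong that the human contribution in chess has largely faded. In fields less well defined than chess, the future of work will likely follow the centaur model. We will become centaurs in our respective fields.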

- Lawyers will use AI to scan thousands of legal documents in seconds, allowing them to focus on crafting complex arguments, negotiating settlements, and advocating in the courtroom.

- Engineers will use AI to test structural integrity across millions of simulated scenarios, giving them the freedom to design more innovative, sustainable buildings.

- Scientists will use AI to sift through vast amounts of climate data, accelerating the development of green technologies.

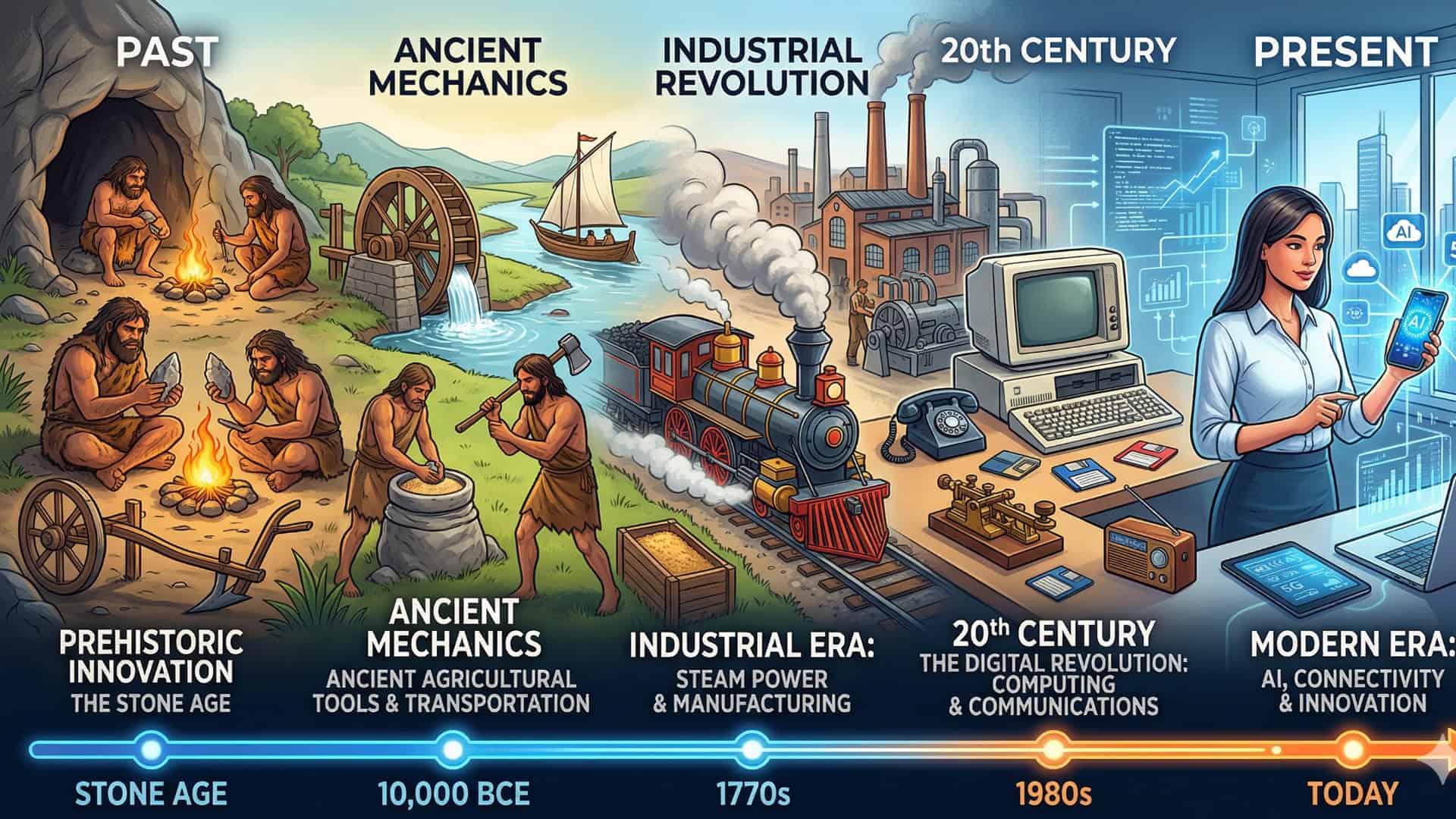

Redefining Human Work

Historically, every major technological revolution—the printing press, the steam engine, the internet—has displaced certain jobs while creating entirely new ones. AI will undoubtedly automate repetitive, predictable, and physically dangerous tasks.

This shift will require society to place a premium on uniquely human skills. The jobs of the future will heavily prioritize:

- Critical thinking and ethical reasoning.

- Complex problem-solving in unpredictable environments.

- Emotional intelligence, leadership, and community building.

- Creative strategy and innovation.

The Imperative of Upskilling and Accessible Education

To ensure a fair and equitable future, we must democratize access to AI literacy. Governments, educational institutions, and corporations must invest heavily in upskilling the global workforce. We must teach people not just how to code, but how to effectively prompt, manage, and collaborate with AI systems. Inclusive education will be the bridge that prevents the AI revolution from widening existing socioeconomic gaps.

Conclusion: A Synergistic Tomorrow

The future is not a battleground where AI and human intelligence fight for supremacy. It is a collaborative landscape. Artificial intelligence is the ultimate amplifier of human potential. It can process the mundane, compute the complex, and calculate the probabilities, leaving humans free to do what we do best: dream, empathize, create, and lead.

By acknowledging the limitations of AI and celebrating the irreplaceable depth of human cognition, we can build a future where technology serves humanity, elevating our collective intelligence to solve the world’s most pressing challenges.

Frequently Asked Questions (FAQ)

1. Will AI eventually replace humans in the workforce?

AI is more likely to replace specific tasks than entire jobs, although some roles built mostly on such tasks will be displaced. Routine, repetitive, and data-heavy tasks are highly susceptible to automation. However, jobs requiring empathy, complex decision-making, physical dexterity in unpredictable environments, and creative strategy will remain firmly in the human domain. The workforce will evolve, requiring humans to work alongside AI tools.

2. Can Artificial Intelligence actually feel emotions?

No. AI does not have feelings, consciousness, or self-awareness. While an AI can be programmed to recognize human emotions (like detecting frustration in a user’s voice) or to generate text that sounds empathetic, it is merely recognizing patterns and outputting data. It does not experience the emotion it is simulating.

3. What is Artificial General Intelligence (AGI)?

Artificial General Intelligence (AGI) refers to a highly autonomous system that can outperform humans at nearly any economically valuable cognitive work. Current AI is “Narrow AI,” meaning it is trained for specific tasks (like generating images or translating text). AGI remains a theoretical concept, and experts disagree on whether it will take decades or centuries to achieve, or whether it is possible at all.

4. How is AI biased, and how can we fix it?

AI algorithms learn from data created by humans. If that data contains historical biases, prejudices, or inequalities, the AI will learn and replicate them. We can combat this by ensuring diverse representation in the teams building AI, meticulously auditing training data for bias, and implementing strict ethical guidelines throughout the development process.

5. How can I prepare for an AI-driven future?

Focus on cultivating “soft skills” that machines cannot replicate: emotional intelligence, adaptability, creative problem-solving, and critical thinking. Additionally, build a basic level of AI literacy. Learn how to use current AI tools (like large language models) to enhance your own productivity and workflows. Lifelong learning will be the most crucial skill in the 21st century.

References and Further Reading

- Stanford University Artificial Intelligence Index Report: A comprehensive, open-source report tracking the progress, impact, and ethical considerations of AI globally. (Search: Stanford AI Index)

- MIT Technology Review – Artificial Intelligence: Authoritative articles and journalism covering the latest breakthroughs, limitations, and societal impacts of machine learning. (Search: MIT Tech Review AI)

- American Psychological Association (APA) – Psychology of AI: Insights into human-computer interaction, the cognitive differences between human and machine learning, and the psychological impact of automation. (Search: APA Psychology and Artificial Intelligence)

- The World Economic Forum – Future of Jobs Report: An in-depth analysis of how AI and automation are expected to shift the global labor market, detailing emerging job roles and necessary skills. (Search: WEF Future of Jobs Report)