Whether you are scrolling through your morning news feed, relying on a navigation app to avoid traffic, or using voice-to-text to send a message, you are interacting with Artificial Intelligence (AI). Once relegated to the realms of science fiction and academic laboratories, AI has seamlessly woven itself into the fabric of our daily lives.

However, despite its ubiquitous presence, the core concepts behind AI remain a mystery to many. The terminology can feel overwhelming, and the narratives surrounding the technology often swing between utopian promises and dystopian fears.

This comprehensive guide is designed to cut through the jargon. We will explore exactly what Artificial Intelligence is, unpack the mechanics of how it actually works, and examine the profound ways it is reshaping our world. Whether you are a student, a business owner, or simply a curious digital citizen, this post will provide you with a foundational, reality-based understanding of the technology defining our era.

Part 1: What Exactly Is Artificial Intelligence?

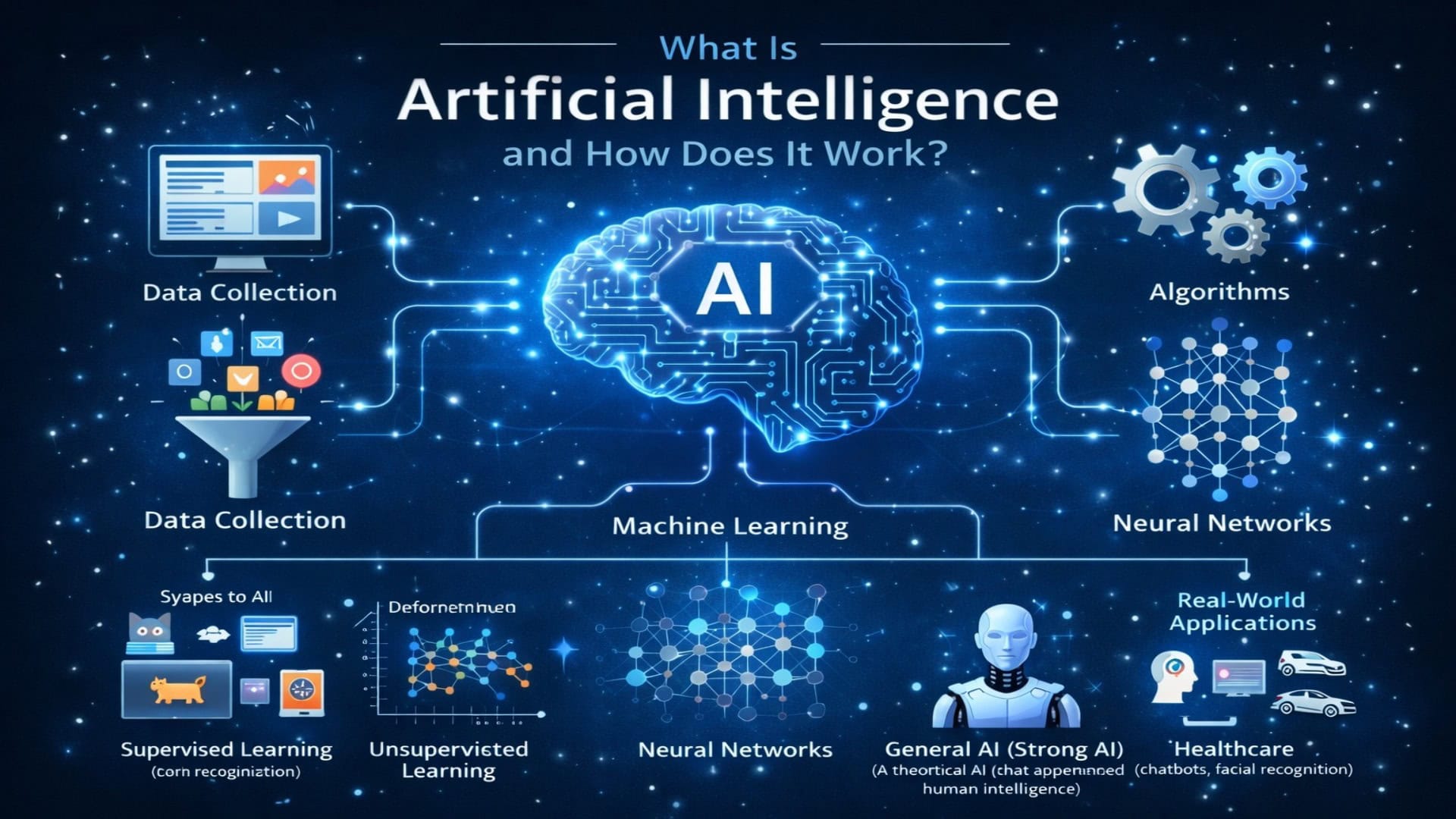

At its most fundamental level, Artificial Intelligence (AI) refers to the simulation of human cognitive processes by machines, particularly computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions), and self-correction.

Instead of being explicitly programmed to perform a single, rigid task, an AI system is designed to process data, identify patterns, and make decisions or predictions based on that data.

To truly understand AI, it is helpful to categorize it by its capabilities. Experts generally describe three levels, only the first of which exists today:

1. Artificial Narrow Intelligence (ANI)

Also known as “Weak AI,” Artificial Narrow Intelligence is the only form of AI that exists today. It is designed and trained to perform a specific, tightly defined task. ANI operates within a pre-determined context and has no self-awareness, consciousness, or genuine understanding.

Every AI application you currently use is a form of Narrow AI, from the algorithms recommending movies on your favorite streaming platform to virtual assistants reporting the weather forecast, and even complex systems like autonomous driving software. These systems are exceptionally good at their specific jobs, but a chess-playing AI cannot suddenly decide to write a poem or diagnose an illness.

2. Artificial General Intelligence (AGI)

Artificial General Intelligence, often referred to as “Strong AI,” is a theoretical form of AI. An AGI system would possess the ability to understand, learn, and apply knowledge across a wide range of tasks at a level equal to a human being. It would feature generalized cognitive abilities, allowing it to solve unfamiliar problems in domains it was not explicitly trained for. While researchers are actively working toward AGI, we have not yet achieved it, and timelines for its potential realization remain a subject of intense debate among experts.

3. Artificial Superintelligence (ASI)

Artificial Superintelligence is a hypothetical concept describing a machine that vastly surpasses human intelligence and capability in every conceivable metric—from scientific innovation and general wisdom to social skills and creativity. This remains purely in the realm of theoretical philosophy and science fiction.

Part 2: The Core Components: How Does AI Work?

When people ask, “How does AI work?” they are usually asking about the specific subfields and techniques that power modern Narrow AI. AI is not a single computer program; it is an umbrella term encompassing a variety of technologies and methodologies. Let’s break down the most vital engines driving AI today.

Machine Learning (ML): The Engine of Modern AI

If AI is the overarching goal, Machine Learning is the primary vehicle getting us there. Machine Learning is a subset of AI that focuses on building systems that can learn from historical data, identify patterns, and make logical decisions with minimal human intervention.

Instead of writing thousands of lines of code detailing exactly how to recognize a picture of a cat, developers feed a machine learning algorithm thousands of pictures of cats (and thousands of pictures of things that are not cats). The algorithm mathematically learns the distinct features of a cat—pointed ears, whiskers, specific eye shapes—on its own.

Machine learning generally relies on three main learning models:

- Supervised Learning: The AI is trained on a “labeled” dataset, meaning the data comes with the correct answers. An example is a dataset of home sales in which the square footage, location, and final sale price are recorded for every home. The model learns the relationship between the features (square footage, location) and the outcome (sale price).

- Unsupervised Learning: The AI is fed raw, unlabeled data and is tasked with finding hidden structures, patterns, or categories on its own. This is often used for customer segmentation or anomaly detection (like spotting fraudulent credit card purchases).

- Reinforcement Learning: The AI learns by trial and error in an interactive environment. It is given a goal and receives “rewards” for correct actions and “penalties” for incorrect ones. This is how many AI systems learn to play complex games or how robotic arms learn to grasp objects.
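To make supervised learning concrete, here is a minimal sketch in Python based on the housing example above. The data is invented for illustration, and the model is a single straight line fitted with ordinary least squares, which is only one of many possible supervised techniques. The names `training_data`, `fit_line`, and `predict` are hypothetical.

```python
# Hypothetical labeled data: (square footage, final sale price in dollars).
# The prices were made up to follow price = 150 * sqft + 50_000,
# so it is easy to check what the model learns.
training_data = [(1000, 200_000), (1500, 275_000), (2000, 350_000), (2500, 425_000)]


def fit_line(examples):
    """Ordinary least squares with one feature: returns (slope, intercept)."""
    n = len(examples)
    mean_x = sum(x for x, _ in examples) / n
    mean_y = sum(y for _, y in examples) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in examples)
    variance = sum((x - mean_x) ** 2 for x, _ in examples)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, sqft):
    """Apply the learned line to a home the model has never seen."""
    slope, intercept = model
    return slope * sqft + intercept


model = fit_line(training_data)
print(predict(model, 1800))
```

Nobody wrote the rule "each square foot adds 150 dollars" into the program. The model recovers that relationship from the labeled examples and then applies it to an 1,800 square foot home that was not in the training data.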

Deep Learning and Neural Networks

Deep Learning is a highly specialized subset of Machine Learning. It relies on structures called Artificial Neural Networks, which are loosely inspired by the architecture of the human brain (though it is important to note they do not replicate biological brain function).

These networks consist of layers of interconnected “nodes” or artificial neurons:

- An Input Layer: Where the data enters the system.

- Hidden Layers: Where the computational heavy lifting happens. The “deep” in deep learning refers to having multiple hidden layers.

- An Output Layer: Where the final prediction or decision is produced.
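The three kinds of layers can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a trained model: the weights are hand-picked, hypothetical numbers, the sigmoid activation is just one common choice, and the names `dense_layer` and `forward` are invented for this example. In a real network, training would adjust the weights automatically.

```python
import math


def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Hypothetical, hand-picked weights: 2 inputs -> 3 hidden neurons -> 1 output.
hidden_weights = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.5, 0.2]]
output_biases = [0.05]


def forward(inputs):
    """Pass data from the input layer through the hidden layer to the output."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)


print(forward([1.0, 2.0]))
```

A "deep" network repeats the hidden-layer step many times, with each layer feeding its outputs to the next.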

Deep learning excels at processing incredibly complex, unstructured data like high-resolution images, raw audio, and vast amounts of text.

Natural Language Processing (NLP)

Natural Language Processing is the branch of AI that gives computers the ability to understand, interpret, and generate human language in a useful way. It is the core technology that AI assistants and chatbots use to read your prompts, work out the context of your questions, and generate words in response.

NLP bridges the gap between human communication and computer understanding through techniques like:

- Tokenization: Breaking text down into smaller units (words or sub-words).

- Sentiment Analysis: Determining the emotional tone behind a body of text.

- Machine Translation: Accurately translating text from one language to another while preserving context and colloquialisms.
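Two of the techniques above, tokenization and sentiment analysis, can be sketched in a simplified form. The word lists below are a hypothetical mini-lexicon, and counting positive and negative words is a deliberately naive stand-in for the learned models that production systems typically use. The function names `tokenize` and `sentiment_score` are invented for this example.

```python
import re

# Hypothetical mini-lexicon; real systems rely on far larger vocabularies
# or on models that learn sentiment from labeled examples.
POSITIVE = {"great", "love", "excellent", "good"}
NEGATIVE = {"bad", "terrible", "hate", "poor"}


def tokenize(text):
    """Lowercase the text and break it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def sentiment_score(text):
    """Add 1 for each positive token and subtract 1 for each negative one."""
    return sum((token in POSITIVE) - (token in NEGATIVE) for token in tokenize(text))


review = "I love this phone, but the battery is terrible!"
print(tokenize(review))
print(sentiment_score(review))
```

The review contains one positive word and one negative word, so the two cancel out. That mixed result hints at why simple word counting struggles with nuance, and why modern NLP leans on models that take context into account.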

Computer Vision

Just as NLP allows AI to understand language, Computer Vision allows AI to “see” and interpret the visual world. Using digital images from cameras and videos, computer vision models can accurately identify and classify objects, and then react to what they “see.” This is the technology that allows self-driving cars to distinguish between a pedestrian, a stop sign, and another vehicle.

Part 3: The Fuel of AI—Data and Infrastructure

No matter how sophisticated an AI algorithm is, it is functionally useless without its primary fuel: Data.

Modern AI systems require massive datasets to learn effectively. When you search the web, click on a digital ad, upload a public photo, or interact with an app, you may be contributing to the global reservoir of data used to train these systems.

The Importance of Inclusive Data

Because AI learns entirely from the data it is fed, the quality and diversity of that data are paramount. This brings us to a critical concept in AI development: Algorithmic Bias.

If an AI system is used to screen resumes for a tech company, but the historical data it trains on consists mostly of resumes from men, the AI might inadvertently learn to penalize applications from women, assuming that “male” is a predictor of success based on past hiring patterns.
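The way a system inherits bias from its training data can be shown with a deliberately naive sketch. The records below are invented, and the "model" is nothing more than the historical hire rate for each group. Real screening systems are far more complex, but the underlying risk is the same: patterns in past decisions get reproduced as if they were signals of merit. The names `history` and `learn_hire_rates` are hypothetical.

```python
from collections import defaultdict

# Hypothetical historical records: (gender noted on the resume, was the person hired?)
# The data is skewed: most resumes come from men, and most past hires were men.
history = (
    [("male", True)] * 60 + [("male", False)] * 20
    + [("female", True)] * 5 + [("female", False)] * 15
)


def learn_hire_rates(records):
    """A naive 'model': the fraction of past applicants hired in each group."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += was_hired
    return {group: hired[group] / total[group] for group in total}


rates = learn_hire_rates(history)
print(rates)
```

If these learned rates were used to score new applicants, women would be scored lower purely because of past hiring patterns, not because of anything in their own resumes.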

To build equitable systems that serve everyone, technologists must prioritize inclusive data practices. This means actively curating datasets that accurately represent diverse populations—accounting for different ethnicities, genders, ages, socioeconomic backgrounds, and people with disabilities. An AI system is only as fair, objective, and useful as the data used to train it.

Computational Power

Processing terabytes of data through deep neural networks requires specialized hardware. Graphics Processing Units (GPUs), originally designed for rendering high-quality video game graphics, proved to be exceptionally good at handling the parallel mathematical computations required for AI. Today, massive data centers filled with specialized AI chips are required to train the world’s most advanced models.

Part 4: Real-World Applications—How AI is Used Today

AI is no longer a futuristic concept; it is an active participant in our global infrastructure. Here are just a few ways AI is transforming different sectors:

1. Healthcare and Medicine

AI is proving to be a powerful tool in medicine. Machine learning algorithms can analyze medical imagery (like X-rays and MRIs) to identify early signs of diseases, such as tumors. On some narrowly defined tasks, studies have reported accuracy comparable to that of human radiologists. Furthermore, AI is accelerating drug discovery by predicting how different chemical compounds will interact, potentially shaving years off the development of life-saving medications.

2. Accessibility

AI is playing a vital role in making the digital and physical world more accessible. Computer vision powers apps that describe physical surroundings to individuals who are blind or have low vision. Advanced NLP provides highly accurate, real-time closed captioning for people who are Deaf or hard of hearing. Predictive text and voice-control interfaces also empower individuals with motor and mobility disabilities to navigate digital spaces effortlessly.

3. Environmental Sustainability

Climate scientists are leveraging AI to process vast amounts of data from satellites and environmental sensors. AI models can help forecast weather patterns, optimize renewable energy grids by predicting wind and solar availability, and track deforestation or ocean health in near real time.

4. Everyday Consumer Technology

- E-commerce and Entertainment: Recommendation engines analyze your past behavior to suggest products you might like or shows you might want to watch.

- Banking: AI monitors transaction patterns to flag potentially fraudulent activity on your credit card in milliseconds.

- Smart Homes: Thermostats that learn your daily routine to optimize energy usage, and smart speakers that can answer questions and control appliances.
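The banking example above can be illustrated with a simple statistical check. This sketch flags a purchase when its amount sits far from a customer's usual spending, measured in standard deviations (a z-score). It is a stand-in chosen for clarity: actual fraud systems weigh many more signals, such as location, merchant, and timing. The function name `flag_unusual`, the threshold of 3.0, and the purchase amounts are all assumptions made for this example.

```python
from statistics import mean, stdev


def flag_unusual(history, new_amount, threshold=3.0):
    """Flag a purchase whose amount is far from the customer's usual spending."""
    average = mean(history)
    spread = stdev(history)  # needs at least two past purchases
    if spread == 0:
        # Every past purchase was identical, so any different amount stands out.
        return new_amount != average
    z_score = abs(new_amount - average) / spread
    return z_score > threshold


# Hypothetical purchase history for one customer, in dollars.
past_purchases = [42.0, 38.5, 51.0, 45.25, 40.0, 47.5]

print(flag_unusual(past_purchases, 46.0))
print(flag_unusual(past_purchases, 900.0))
```

A 46 dollar purchase fits the customer's usual pattern and passes, while a 900 dollar purchase lies far outside it and is flagged for review.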

Part 5: The Future of AI—Challenges and Human-Centric Innovation

As AI continues to evolve, society faces several critical challenges that require thoughtful navigation.

The Transformation of Work

One of the most persistent concerns regarding AI is job displacement. While it is true that AI will automate certain repetitive and routine tasks, history shows that technological revolutions tend to shift the nature of work rather than simply eliminating it. The future is likely to lean toward Human-AI Collaboration, where AI handles data processing and automation, freeing humans to focus on strategy, empathy, creativity, and complex problem-solving.

Hallucinations and Reliability

Generative AI models (like large language models) produce text by repeatedly predicting a statistically likely next word (more precisely, a "token") in a sequence. Because they model patterns in text rather than checking claims against verified facts, they can occasionally produce false or nonsensical information presented in a highly confident tone. In the tech industry, this is known as a “hallucination.” Users must practice digital literacy, verifying critical information generated by AI with trusted, human-vetted sources.
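Next-word prediction can be demonstrated with a toy model that simply counts which word most often follows another in a small piece of text. This is a heavily simplified sketch: large language models use neural networks trained on enormous datasets, not raw counts, and the function names `train_bigrams` and `most_likely_next` are invented for this example. The core idea is the same, though, which is to pick a continuation based on patterns in the training text.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def most_likely_next(followers, word):
    """Return the most frequent follower, or None if the word was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigrams(corpus)
print(most_likely_next(model, "the"))
```

The model answers "cat" because that word follows "the" most often in its training text, not because it knows anything about cats. A system built this way reproduces what is statistically common, which is why a fluent answer is not automatically a true one.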

Ethics and Regulation

How do we ensure AI is used responsibly? Governments and international bodies are currently grappling with how to regulate AI. Key ethical considerations include protecting user privacy, ensuring transparency in how AI makes decisions (the “black box” problem), and preventing the use of AI for malicious purposes, such as deepfakes or automated cyberattacks.

The goal is to develop Human-Centric AI: systems designed to augment human capability, respect human rights, and operate with transparency and fairness.

Frequently Asked Questions (FAQ)

1. Is AI conscious or self-aware?

No. Current AI systems are sophisticated mathematical models that process data and recognize patterns. They do not have feelings, beliefs, consciousness, or self-awareness. They are powerful tools, but they are entirely devoid of human-like understanding.

2. Will Artificial Intelligence take my job?

AI is more likely to change the nature of your job than take it entirely. While highly repetitive tasks are susceptible to automation, AI is currently best utilized as an assistive tool. Professionals who learn to integrate AI into their workflows to increase their own productivity and creativity will likely have a significant advantage in the future job market.

3. What is an algorithm?

In simple terms, an algorithm is a set of rules or step-by-step instructions given to a computer to help it solve a problem or complete a task. Think of it like a highly detailed recipe for baking a cake, but written in code for a machine to execute.

4. Why does AI sometimes give wrong answers?

AI models base their outputs on the data they were trained on. If the training data is incomplete, biased, or inaccurate, the AI’s output will reflect those flaws. Additionally, Generative AI models generate responses based on probability, which can sometimes lead to plausible-sounding but factually incorrect statements (hallucinations).

5. What is the difference between AI and Machine Learning?

AI is the broad concept of creating machines capable of simulating human cognitive functions. Machine Learning is a specific technique within AI where computers are taught to learn from data without being explicitly programmed for every single step. All machine learning is AI, but not all AI is machine learning.

6. How can I protect my privacy in an AI-driven world?

Be mindful of the data you share online. Read privacy policies to understand how your data is being used and stored. Utilize privacy settings on your devices and accounts to limit data tracking, and be cautious about sharing highly sensitive personal information with public AI chatbots or untrusted applications.

References and Further Reading

To continue your learning journey, explore these authoritative resources on Artificial Intelligence:

- IBM Technology: What is Artificial Intelligence (AI)? (A comprehensive, accessible breakdown of AI concepts from a leading tech pioneer)

- MIT Technology Review: AI News and Analysis (Up-to-date reporting on the latest breakthroughs, ethical debates, and real-world applications of AI)

- Stanford University: Human-Centered Artificial Intelligence (HAI) (Research and whitepapers focusing on the ethical, societal, and human-centric development of AI)

- Google Machine Learning Crash Course (A fast-paced, practical introduction to machine learning principles for those looking to get slightly more technical)
